Student Innovation Challenge

This year, seven teams from around the world participated in the Student Innovation Challenge, using haptic technology in new, creative ways to solve real-world problems. At the conference, the teams will compete for $2,000 in awards. A panel of judges will choose a first-place winner and a runner-up. The judges are:

- Mounia Ziat, Northern Michigan University

- David Birnbaum, Immersion, Inc.

- Evren Samur, Boğaziçi University

The People’s Choice award will be decided by conference attendees. Be sure to visit the challenge during the demo sessions and vote for your favourite! You can find project descriptions below.

The Student Innovation Challenge is sponsored by Disney Research, the IEEE Technical Committee on Haptics (TCH), and Google ATAP.

- Real-time haptic enhanced tele-rehabilitation system for physical therapy

Cristina Ramirez Fernandez, Nirvana Estivalis Green Morales, David Bonilla Castillo, Oliver Pabloff Angeles

Abstract: Nowadays, mainly due to a combination of demographic changes, a lack of resources in the field of public health, and technological improvements, the development of new rehabilitative practices seems necessary in order to build models for rehabilitation that are sustainable from the clinical, organizational, and economic perspectives. Aiming to provide remote rehabilitation to patients, we propose a real-time haptic-enhanced tele-rehabilitation system for physical therapy. A virtual environment, the Vybe haptic gaming device, and the Leap Motion gesture controller are combined as tools for performing physical therapy. First, the input parameters of the therapy are individualized and calibrated according to the patient's characteristics. Second, the execution of the therapy depends on the therapist's hand movements in the virtual environment, which generate multimodal feedback for the patient (i.e., visual, vibrotactile, and audible feedback). Finally, therapy results are automatically updated in the patient's clinical record.

- Tele Teku-Teku

Daniel Gongora, Hideto Takenouchi, Wenchao Gu, Yoshihiro Kato

Abstract: Tele Teku-Teku is a shared walking experience. It is a system designed for friends who want to go for a stroll together but cannot because one of them is confined to a certain place. We use the Vybe haptic gaming pad to provide vibrotactile feedback in sync with the footsteps of a distant friend. One user wears vibration sensors and carries an avatar robot equipped with an IMU and a camera; the other wears a head-mounted display and sits on a chair enhanced by the gaming pad. Together, these technologies set the stage for a rich and engaging experience. Shall we walk?

- DriPad

Tianxiang Chen, Yilin Liu, Xinqian Xiang, Wenxuan Tang

Abstract: To act quickly and precisely, a driver needs a fusion of multiple senses, including vision, hearing, and the sense of vibration. Utilizing the “Surround Haptics” of the Vybe gaming pad, we propose “DriPad”, a prototype haptic feedback pad that augments the driver's sense of vibration. Applications include direction prompts during navigation, following-distance feedback, and drowsy-driving alerts. The kit should be integrated with a device that supports vehicle navigation and with other sensors that detect the status of the vehicle or driver. DriPad is intended to be a haptic feedback device that improves the navigation experience and driving safety.

- HapticSense

Henry Li, Matthew Chun

Abstract: Automated driving promises safer, more efficient, and more comfortable driving experiences through changes in current driving conventions. As a motivating example, advanced control allows following distances between vehicles to be greatly reduced, increasing efficiency and traffic throughput without compromising passenger safety. However, on the way to the ideal of fully automated streets, there will be situations in which automated driving is not possible. It is paramount that drivers accustomed to autonomous vehicles are able to conduct themselves safely when resuming manual control. We explore various haptic cues and their efficacy in helping drivers pilot their autonomous cars safely when required to assume manual control.

- Intelligent Driver Seat

Rachel van Berlo, Frank van Valkenhoef

Abstract: Autonomous cars are soon to become a reality. Within the transition from regular to autonomous cars lies a challenge: how to reengage passengers with traffic when they need or want to take over control of the car. Because the car is aware of its surroundings, it can continuously translate the overload of visual information into a simplified vibrotactile signal, notifying the passenger of the most significant changes and most important aspects of the current situation. This allows for a faster and safer switch between passive and active participation in traffic and supports the passenger's trust in the car's intelligence. Regardless of the passenger's activity, the seat remains one of the guaranteed points of contact between the car and its passengers. The seat is therefore highly suitable for transferring information.

- Listen to your Heart

Anıl Ozen, Mustafa Ozan Ozen

Abstract: Meditation is an effective method to clear and renew the mind. However, it can be difficult to get into a meditative state of mind during a short break. In this system, the user puts on headphones, attaches the heart pulse sensor to a finger, remains seated in their work chair, which is already fitted with the Vybe haptic gaming pad, and closes their eyes. The system greets the user with soothing audio and gives instructions on breathing. During the session, each heart pulse is detected by the sensor, and the user receives a heartbeat sound through the headphones as well as a vibration from the chair matching each beat. Depending on the heart rate, the user is instructed to breathe slower and deeper or to keep up the pace. After 10 minutes, the user has meditated and is ready to work again.
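
The feedback rule described in this abstract can be illustrated with a small sketch. This is not the team's implementation: the function names, the use of beat timestamps as input, and the 70 beats-per-minute target are all assumptions chosen for illustration, since the abstract does not state how the heart rate is computed or what threshold is used.

```python
from typing import Sequence


def bpm_from_beat_times(beat_times_s: Sequence[float]) -> float:
    """Estimate beats per minute from timestamps (in seconds) of detected pulses."""
    if len(beat_times_s) < 2:
        raise ValueError("need at least two beats to estimate a rate")
    # Intervals between consecutive beats, in seconds.
    intervals = [b - a for a, b in zip(beat_times_s, beat_times_s[1:])]
    mean_interval = sum(intervals) / len(intervals)
    if mean_interval <= 0:
        raise ValueError("beat times must be strictly increasing")
    return 60.0 / mean_interval


def breathing_command(bpm: float, target_bpm: float = 70.0) -> str:
    """Pick a spoken prompt from the heart rate.

    target_bpm is a hypothetical threshold, not a value from the abstract.
    """
    if bpm > target_bpm:
        return "breathe slower and deeper"
    return "keep up the pace"


if __name__ == "__main__":
    rate = bpm_from_beat_times([0.0, 0.5, 1.0, 1.5])
    print(rate, breathing_command(rate))
```

In a real session, each detected beat would also trigger the heartbeat sound and the chair vibration; those device calls are omitted here because the abstract does not describe the audio or Vybe interfaces.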

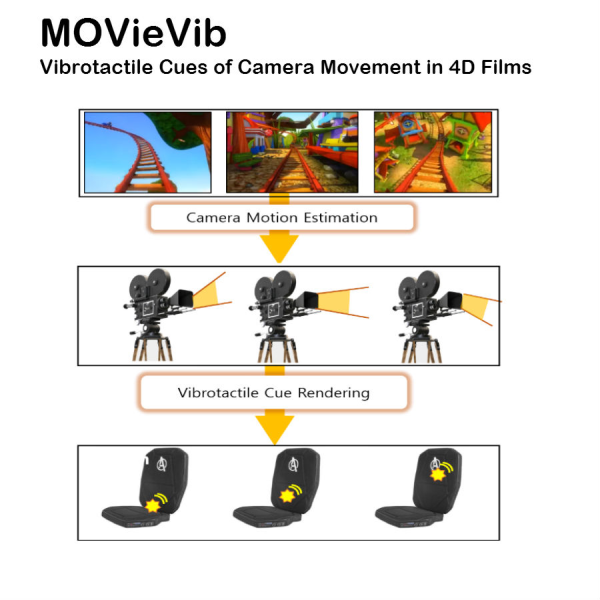

- MOVieVib: Vibrotactile Cues of Camera Movement in 4D Films

Jongman Seo, Jaebong Lee, Junsuk Park

Abstract: We propose MOVieVib, a system that automatically generates vibrotactile feedback from camera movement in 4D films. The system conveys directional cues of camera motion as vibrations through the Vybe, which has a vibrotactile grid display and is relatively cheap. We will use a previously presented algorithm that automatically synthesizes motion effects in response to camera motion. This camera-estimation algorithm is optimized for synthesizing plausible motion effects for viewers, since it does not require reconstructing physically exact camera motion. It can estimate the angular velocity, linear velocity, and linear acceleration of a camera motion. Some of these parameters are used to generate directional vibrotactile cues. These cues should be optimized for perceptually clear sensations to maximize the sense of immersion.
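
As a rough illustration of how an estimated camera velocity could be turned into a directional cue on a vibrotactile grid, consider the sketch below. It is an assumption-laden example, not the authors' method: the 3×3 grid size, the normalized actuator coordinates, the `max_speed` scaling, and the function name `directional_cue` are all hypothetical, and the abstract specifies neither the Vybe's actuator layout nor the mapping the team uses.

```python
import math
from typing import List


def directional_cue(vx: float, vy: float, rows: int = 3, cols: int = 3,
                    max_speed: float = 1.0) -> List[List[float]]:
    """Map a 2D camera velocity to per-actuator intensities in [0, 1].

    Actuators are placed on a hypothetical grid spanning [-1, 1] in x
    (left to right) and y (bottom to top). Actuators lying in the
    direction of motion vibrate more strongly.
    """
    grid = [[0.0] * cols for _ in range(rows)]
    speed = math.hypot(vx, vy)
    if speed == 0.0:
        return grid  # no motion, no cue
    ux, uy = vx / speed, vy / speed          # unit direction of motion
    gain = min(speed / max_speed, 1.0)       # faster motion, stronger cue
    for r in range(rows):
        for c in range(cols):
            x = (-1.0 + 2.0 * c / (cols - 1)) if cols > 1 else 0.0
            y = (1.0 - 2.0 * r / (rows - 1)) if rows > 1 else 0.0
            # Projection of the actuator position onto the motion direction.
            alignment = x * ux + y * uy
            grid[r][c] = gain * max(0.0, min(alignment, 1.0))
    return grid


if __name__ == "__main__":
    for row in directional_cue(1.0, 0.0):
        print(row)
```

With a rightward velocity, only the right-hand column is driven; clamping the projection keeps every intensity within the range an actuator driver would typically expect.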
